When I started writing the MVP for my current startup, I chose Ruby on Rails out of familiarity. I will also confess to harboring a secret Lord of the Rings vision of a single programming language to rule them all: Ruby for web (RoR), distributed systems development (JRuby), automation (Chef), and testing (RSpec/Cucumber). In those early days, my choices seemed to have little downside, and other than raising an occasional eyebrow at @dhh's tweets, I was generally happy with Rails.

My first doubts arose when I set out to hire a couple of Rails engineers. To my surprise, while Boston has plenty of software developers with Rails experience (757 to be exact), the talent density in the community is... how do I say this? ...low. I am not saying there are not great Rails developers in Boston (there are). I’m also not saying there are not great developers in Boston who have previously used Rails (there are). But a surprisingly high percentage of the developers claiming Rails experience on their resumes are not what you would call "A players."

To further complicate matters, the more my newly hired team drove toward a Single Page App that leveraged REST endpoints, the less the prescriptive approach of Rails supported us.

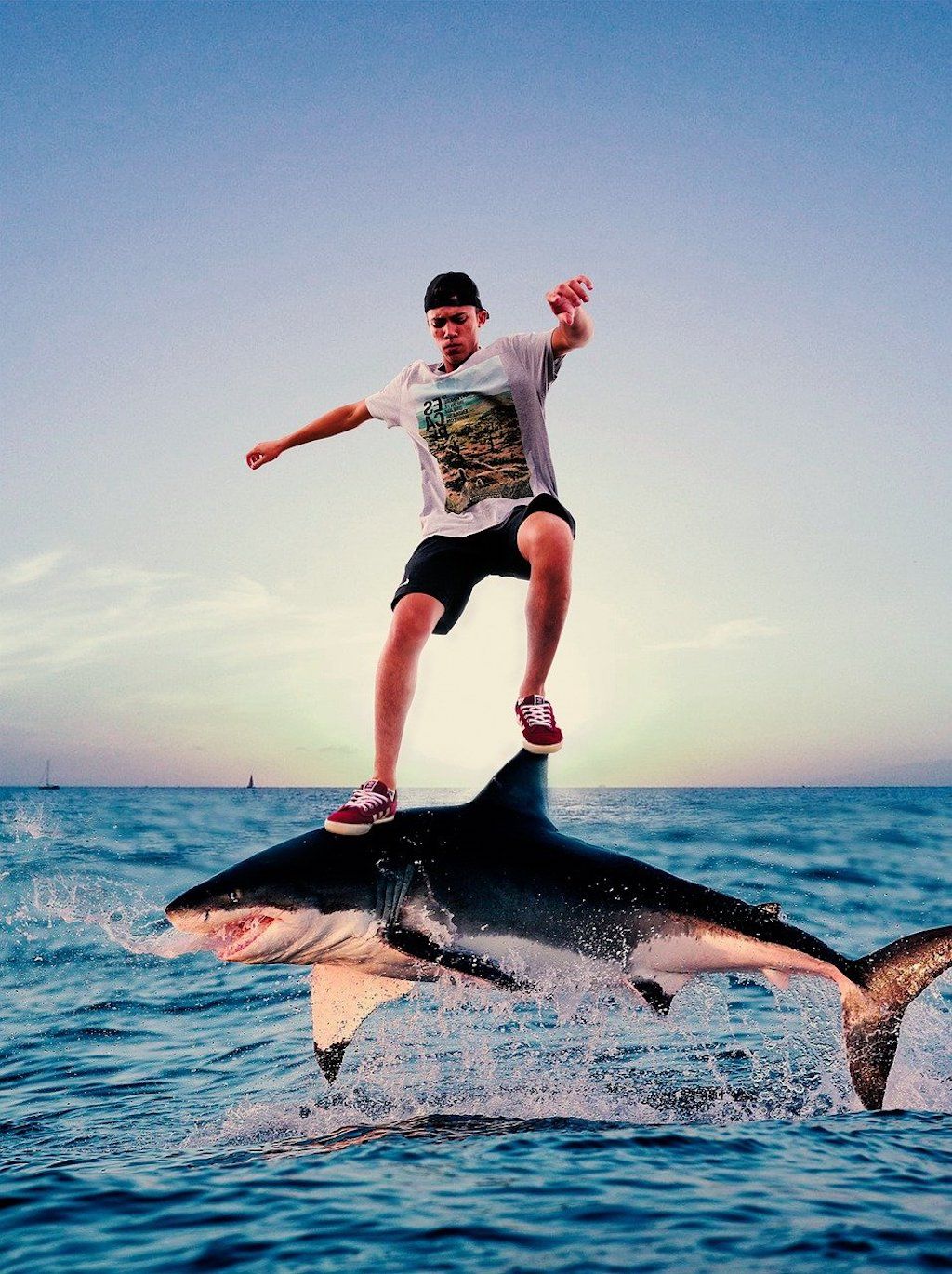

So here are my top reasons why Rails jumped the shark (in 2010, with 3.0?):

- The talent density in any technical community naturally declines with age.

- Web development has steadily been moving from heavy server-side (e.g. Rails) to lighter SPA (e.g. Angular) frameworks.

- Core tenets Rails promoted or pioneered (e.g. convention over configuration, don't repeat yourself) have long since gone mainstream.

- There are many choices available today for web development (e.g. Node.js) that were not available when Rails hit the mainstream in 2007.

- As with any successful framework, with age comes bloat.

- Sometimes it's nice to have your framework come without a side of opinion.

Rails was a great framework for its time. But all good things must come to an end.

So goodbye Rails. Hello... Node? ;)